The Rise of Open-Source AI: Why More Users Are Ditching Paid Subscriptions

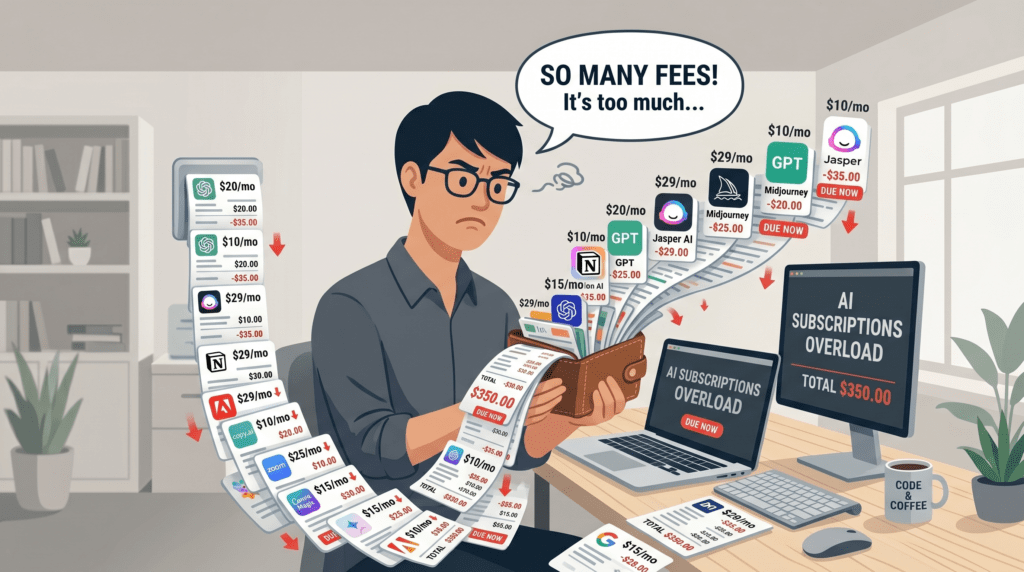

For a while, the appeal of paid AI tools was simple: pay one monthly fee, get access to something genuinely useful. But that calculus has shifted. What started as one or two subscriptions has quietly become a stack (a coding assistant here, a research tool there, a chat interface somewhere else), and the monthly total is no longer trivial.

A growing number of developers, researchers, students, and privacy-conscious users are asking a reasonable question: do I actually need to pay for all of this?

The answer, increasingly, is no. Open-source and self-hosted AI tools have matured to the point where they offer real, practical alternatives to many paid platforms. This article looks at what is driving that shift, which tools are worth your time, and what trade-offs you should understand before making the switch.

Why Paid AI Subscriptions Are Starting to Frustrate People

The Cost Keeps Stacking

It used to be one subscription. Now it is four. A research tool, a writing assistant, a coding agent, a document tool — each with its own monthly fee, each justified on its own terms. Together they become a real expense, especially for independent creators, students, or small teams without a corporate card absorbing the cost.

Usage Caps Break Your Workflow

Most paid tiers come with limits. Rate caps, message quotas, context window restrictions — all things you only notice when you are deep in a task and suddenly hit a wall. Upgrading to remove those limits often means moving to a more expensive plan, and the cycle continues.

Privacy Remains a Genuine Concern

Every query you send to a cloud-based AI is a query leaving your machine. For personal research, sensitive business material, or anything involving confidential data, that is not a comfortable position. Even platforms with thoughtful privacy policies are still cloud services by nature.

Lock-In Is a Real Risk

Your workflows, prompts, integrations, and habits are built around a specific platform. If that platform changes its pricing, deprecates a feature, or simply disappears, the disruption is yours to absorb. Vendor dependency is a technical risk that does not get discussed enough.

Cloud Dependence Is a Structural Limitation

Offline work, slow connections, travel, organizational network restrictions — cloud-only tools fail in all of these situations. A workflow that depends on someone else’s servers is, by definition, fragile.

Why Open-Source AI Is More Practical Than It Used to Be

A few years ago, running a capable language model locally required serious technical knowledge and expensive hardware. That is no longer the case.

Consumer GPUs can now run surprisingly capable models. Apple Silicon has made on-device AI genuinely fast. Installers have become friendlier. And the models themselves, particularly in the open-source ecosystem, have improved significantly in quality and range.

There is also a cultural shift. More users understand what model quantization means, what RAG (retrieval-augmented generation) enables, and why local inference matters for privacy. The tools have met users partway, and users have moved toward the tools.

The result is a growing set of open-source applications that are no longer hobby projects. They are serious, maintained, and increasingly user-friendly alternatives to paid platforms.

The Best Open-Source AI Tools Worth Trying

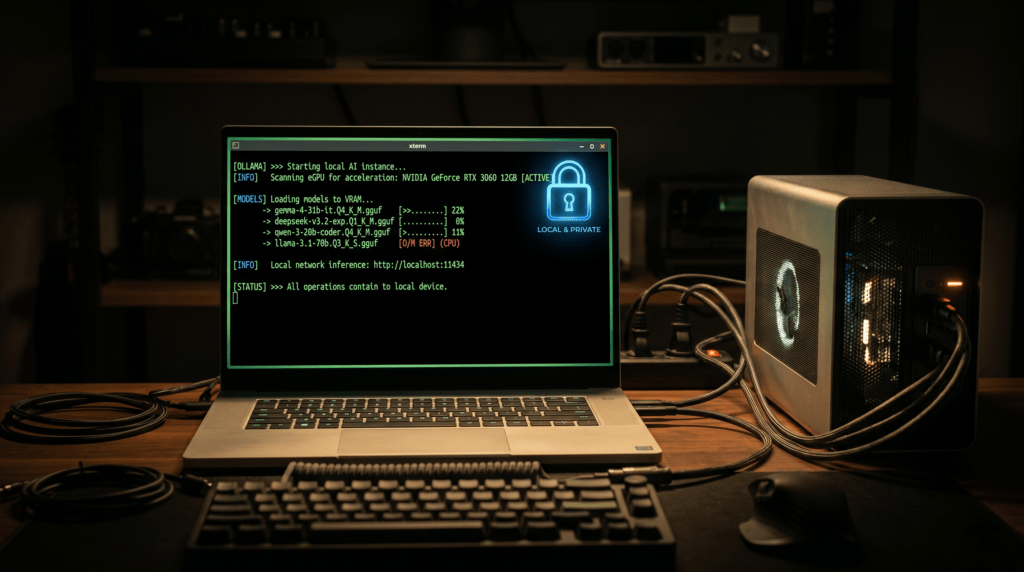

Ollama

If you want to run large language models on your own machine, Ollama is almost certainly where you should start. It handles model downloads, versioning, and local serving through a clean command-line interface, and it integrates with a wide range of front-end apps.

Ollama supports models like Llama 3, Mistral, Gemma, Phi, and many others. Once a model is running locally, it is fast, offline-capable, and entirely private.
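As a rough illustration of what "local serving" means in practice, here is a minimal Python sketch that talks to Ollama's HTTP API using only the standard library. It assumes the server is running on its default address (`http://localhost:11434`) and that a model tagged `llama3` has already been pulled. The helper names `build_request` and `ask_local_model` are made up for this example.

```python
import json
import urllib.request

# Default address of a locally running Ollama server (assumed unchanged).
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_request(prompt: str, model: str = "llama3") -> urllib.request.Request:
    """Build a POST request for Ollama's /api/generate endpoint."""
    payload = {
        "model": model,    # assumed to be pulled already, e.g. `ollama pull llama3`
        "prompt": prompt,
        "stream": False,   # ask for a single JSON object instead of a stream
    }
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask_local_model(prompt: str, model: str = "llama3") -> str:
    """Send the prompt to the local server and return the generated text."""
    with urllib.request.urlopen(build_request(prompt, model), timeout=120) as resp:
        body = json.loads(resp.read().decode("utf-8"))
    return body["response"]
```

With the server running, calling `ask_local_model("Explain quantization in one sentence.")` should return the model's reply as a string. The request goes to `localhost`, so the prompt stays on your machine.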

What it replaces: The underlying inference layer behind most paid chat and assistant tools.

Best for: Anyone building a local AI stack. Nearly every other tool on this list works better with Ollama underneath it.

Setup complexity: Moderate. The installation is straightforward, but knowing which model to run for which task takes some experimentation.

Perplexica

Perplexica is an open-source alternative to Perplexity, the popular AI search platform. It combines web search with AI-assisted synthesis, allowing you to ask questions and get sourced answers without your queries being logged by a hosted AI platform. The web searches themselves still go out to search engines, through a SearXNG metasearch instance that you run yourself.

It is self-hosted, runs against your local or remote LLM, and surfaces citations alongside its responses so you can verify what you are reading.

What it replaces: Perplexity or similar AI search tools that route your research queries through a cloud platform.

Best for: Researchers, journalists, students, and anyone who values source attribution and private browsing in their AI-assisted research.

Setup complexity: Moderate. Requires Docker and some configuration, but the documentation is solid.

OpenCode

OpenCode is a terminal-based coding agent designed for developers who want AI assistance without leaving their workflow and without depending on a proprietary cloud service. It offers what you would expect from a Claude Code-style workflow: contextual code understanding, inline suggestions, and task execution inside your development environment.

Because it works with local or custom model backends, it can be configured to keep your code entirely off external servers.

What it replaces: Paid coding agents and AI-assisted terminal tools.

Best for: Developers who work primarily in the terminal and want a local, configurable coding assistant.

Setup complexity: Intermediate to advanced. Most comfortable for developers already at home in the command line.

Open Notebook

Open Notebook is an open-source alternative to Google’s NotebookLM, built for users who want to have AI-powered conversations with their own documents (PDFs, notes, research papers, books) without uploading them to a third-party service.

You load your documents locally, and the tool lets you ask questions, extract insights, and synthesize content from your own materials. For anyone doing literature reviews, studying, or organizing long-form research, this kind of grounded AI conversation is genuinely useful.
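The idea behind this kind of grounded conversation is retrieval-augmented generation: find the passages most relevant to a question, then hand them to the model alongside the question. The toy Python sketch below shows the retrieval step with plain word-count similarity. It is an illustration of the concept only, not how Open Notebook itself is implemented; real tools typically use embedding models and a vector store. All function names here are hypothetical.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> Counter:
    """Lowercase the text and count its words."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[w] * b[w] for w in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(question: str, chunks: list[str], top_k: int = 1) -> list[str]:
    """Return the top_k chunks most similar to the question."""
    q = tokenize(question)
    ranked = sorted(chunks, key=lambda c: cosine(q, tokenize(c)), reverse=True)
    return ranked[:top_k]


def build_prompt(question: str, chunks: list[str]) -> str:
    """Ground the model by placing the retrieved chunks above the question."""
    context = "\n".join(f"- {c}" for c in retrieve(question, chunks))
    return f"Answer using only this context:\n{context}\n\nQuestion: {question}"
```

The string returned by `build_prompt` is what would be sent to a local model, so the answer is drawn from your own documents and not only from the model's general training data.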

What it replaces: NotebookLM and similar document-intelligence platforms.

Best for: Students, academics, analysts, and researchers working with sensitive or proprietary documents.

Setup complexity: Moderate. Local setup is required, but the payoff in privacy and flexibility is significant for heavy document users.

AnythingLLM

AnythingLLM is arguably the most ambitious tool on this list. It combines local LLM support, retrieval-augmented generation, agents, and a desktop-style interface into one application. You can connect your own documents, run multiple model backends, and build out an AI workspace that is entirely under your control.

It functions like a private, self-hosted version of what many users currently cobble together across multiple paid subscriptions.

What it replaces: A combination of tools including AI chat, document Q&A, and agent workflows that would otherwise require multiple subscriptions.

Best for: Power users, small teams, and anyone who wants one coherent AI environment instead of five separate tools.

Setup complexity: Moderate. The desktop app makes it approachable, though connecting custom models and configuring RAG pipelines requires some investment of time.

LibreChat

LibreChat is a self-hosted chat interface that connects to multiple AI backends — OpenAI, Anthropic, local Ollama models, and others — through a single, unified interface. Rather than maintaining separate tabs and accounts for different AI services, LibreChat lets you manage them all in one place, with full control over your data.

It is particularly appealing for users who want access to multiple models without paying for each platform’s premium tier separately.

What it replaces: Multiple AI chat subscriptions and fragmented browser-tab workflows.

Best for: Privacy-focused users, power users managing multiple model providers, and teams wanting a centralized, auditable AI interface.

Setup complexity: Moderate. Docker-based deployment is well-documented, and there is an active community.

Quick Comparison Table

| Tool | Best Use Case | Replaces | Runs Locally | User Level |
|---|---|---|---|---|
| Ollama | Running local LLMs | Cloud inference layers | Yes | Beginner to Intermediate |
| Perplexica | Private AI web search | Perplexity | Yes (via Ollama) | Intermediate |
| OpenCode | Terminal coding agent | Cloud coding assistants | Yes | Intermediate to Advanced |
| Open Notebook | Document Q&A and research | NotebookLM | Yes | Intermediate |
| AnythingLLM | All-in-one AI workspace | Multiple subscriptions | Yes | Intermediate to Advanced |
| LibreChat | Multi-model chat hub | Multiple chat platforms | Yes | Intermediate |

Which Tool Should You Start With?

If you are a beginner, start with Ollama and pair it with AnythingLLM. The desktop app gives you a friendly interface while Ollama handles the model layer. You can be running a private AI chat session in under an hour.

If privacy is your primary concern, Ollama plus LibreChat gives you a clean, cloud-free setup where your queries never leave your machine, provided you connect LibreChat only to local models and not to hosted providers. It is the most direct replacement for a standard AI chat subscription.

If you are a researcher or student, Open Notebook and Perplexica are the most directly useful tools. One helps you work through your own documents. The other helps you search the web without surrendering your queries.

If you are a developer, OpenCode is worth exploring first. It fits into existing terminal workflows and gives you local AI coding assistance without routing your codebase through external servers.

If you want to replace everything at once, AnythingLLM is the most complete option. It requires more setup time, but it consolidates what might otherwise be three or four separate tools into one self-hosted workspace.

The Trade-offs Nobody Should Ignore

Open-source and local AI tools are genuinely good. They are also not a perfect drop-in replacement for every paid service. Here are a few things worth knowing before you commit.

Setup takes real time. Installing Docker, configuring model backends, and wiring tools together is not the same as signing up for a web app. The friction is real, especially for non-technical users.

Hardware matters. Running larger, more capable models requires a decent machine — ideally with a modern GPU or Apple Silicon. Smaller models run on less but are less capable. The gap between local and cloud model quality has narrowed, but it has not closed entirely.

You become the operator. Updates, backups, troubleshooting, and security are now your responsibility. For individual users that is manageable. For teams, it requires more deliberate planning.

Some tools work better together. Most users will end up assembling a small stack rather than finding one tool that does everything. That combination can be powerful, but it also means more to maintain.

Cloud models still lead at the frontier. For tasks requiring the highest reasoning capability or the most current information, frontier cloud models remain ahead. The open-source ecosystem is catching up quickly, but it has not fully arrived.

Final Takeaway

Open-source AI has crossed a meaningful threshold. These tools are no longer experiments for enthusiasts willing to tolerate rough edges. They are practical, maintained, and capable of replacing real paid subscriptions for a wide range of users.

The appeal is not just about saving money, though that is real. It is about running workflows that do not depend on external rate limits, corporate pricing decisions, or cloud availability. It is about keeping sensitive documents and queries off servers you do not control. And it is about building an AI setup that fits your actual needs instead of adapting your needs to fit a platform.

For most users, the path forward is not all-or-nothing. A hybrid approach (local tools for private and everyday tasks, cloud tools for complex or frontier use cases) makes sense. But the option to shift more of that stack toward open-source alternatives has never been more viable than it is now.

The tools exist. The hardware is ready. The main remaining variable is whether you are willing to invest a few hours of setup for lasting control over your own AI workflow.

Frequently Asked Questions

Q: Can open-source AI tools really match the quality of paid services like ChatGPT or Claude?

For many everyday tasks such as summarization, coding help, document Q&A, and web search, modern open-source models running locally are competitive with paid tiers. For the most complex reasoning or frontier capabilities, cloud models still have an edge, but the gap has narrowed considerably.

Q: Do I need a powerful computer to run these tools?

Not necessarily. Smaller quantized models in the 3 to 7 billion parameter range run acceptably on most modern laptops, including those without dedicated GPUs. Apple Silicon Macs in particular handle local AI inference very well. Larger models benefit from a dedicated GPU.
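A back-of-the-envelope calculation shows why quantization makes this possible. The Python sketch below estimates the memory needed for the model weights alone, as parameters times bits per weight divided by eight. It deliberately ignores the context cache and runtime overhead, and real quantized formats use slightly more than their nominal bit width, so treat the numbers as lower bounds. The function name is made up for this example.

```python
def weight_memory_gb(params_billion: float, bits_per_weight: int) -> float:
    """Approximate memory for the model weights alone, in GB (10^9 bytes)."""
    bytes_total = params_billion * 1e9 * bits_per_weight / 8
    return bytes_total / 1e9


# Compare unquantized 16-bit weights with 4-bit quantized weights.
for size in (3, 7):
    for bits in (16, 4):
        print(f"{size}B at {bits}-bit: ~{weight_memory_gb(size, bits):.1f} GB")
```

By this estimate, a 7 billion parameter model drops from about 14 GB at 16-bit precision to about 3.5 GB at 4-bit, which is the difference between needing a dedicated GPU and fitting in ordinary laptop memory.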

Q: Is it safe to run AI tools locally in terms of security?

Running AI locally is generally more private than using cloud services because your data does not leave your machine. However, you take on responsibility for keeping your software updated and your local environment secure.

Q: Which of these tools is easiest for someone with no coding experience?

AnythingLLM has the most user-friendly desktop interface and is the most accessible starting point for non-developers. Ollama requires basic command-line comfort but is well-documented and has a large support community.

Q: Can I use these open-source tools for professional or business work?

Yes, and many users do. For anything involving sensitive client data, proprietary research, or confidential communications, local tools offer meaningful privacy advantages over cloud alternatives. Check the license for each tool if redistribution or commercial use is part of your plan.